AI UX Engineering·22 min read·March 23, 2026

The Architecture of Undo: Building Reversible AI Actions in Production Systems

Your AI agent just booked the wrong flight, sent a premature email, and modified a production database. Ctrl-Z does not work here. Reversible AI actions require event sourcing, compensating transactions, and an entirely new engineering discipline for undoing the real world.

Viktor Bezdek · Engineering / Product Leadership

The AI assistant was supposed to help. The user said 'reschedule my dentist appointment to next Thursday.' The AI interpreted 'next Thursday' as March 26 instead of April 2 — a common ambiguity that trips up both humans and machines. It accessed the dental office's booking API, cancelled the existing appointment, and booked a new one for the wrong date. It sent a confirmation to the dental office. It updated the user's calendar. It sent a reminder notification to the user's partner. By the time the user noticed the error, four systems had been modified, two people had been notified, and the original time slot was no longer available because someone else booked it.

The user hits undo. Nothing happens. There is no undo. The AI interface has a beautiful chat history showing every step it took, but not a single step is reversible through the interface. The user must now manually call the dental office, explain the error, ask for a replacement slot because the original one has already been taken, update their calendar, and notify their partner. The AI created a multi-system state change in seconds. Unwinding it takes thirty minutes of human effort. This is the undo gap, and it is the single most dangerous UX failure in agentic AI systems.

Why Traditional Undo Fails for AI

Undo in traditional software is well-understood. A text editor maintains a stack of operations and reverses them in order. A design tool keeps a history of state snapshots. A version control system stores diffs that can be reverted. These patterns share a common property: the system has complete control over the state that was modified. All changes happened within the application's boundary. Reversal is a matter of restoring previous internal state.
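To make the contrast concrete, here is a minimal sketch of the in-application case. The `TextBuffer` class and its methods are hypothetical, not taken from any real editor; the point is only that the inverse of each edit is trivial to build when the application owns all of the state.

```python
class TextBuffer:
    """Toy editor buffer: every edit pushes its own inverse onto a stack."""

    def __init__(self) -> None:
        self.text = ""
        self._undo_stack = []  # callables that restore the previous state

    def append(self, s: str) -> None:
        previous = self.text
        self.text += s
        # The inverse is easy to build because the buffer owns all of its state.
        self._undo_stack.append(lambda: setattr(self, "text", previous))

    def undo(self) -> bool:
        """Reverse the most recent edit; return False if there is nothing to undo."""
        if not self._undo_stack:
            return False
        self._undo_stack.pop()()
        return True


buf = TextBuffer()
buf.append("Hello")
buf.append(", world")
buf.undo()
print(buf.text)  # Hello
```

Nothing in this sketch leaves the process, which is exactly the property agent actions lack.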

AI agents shatter this assumption because they modify external state. They send emails that have been read. They book reservations that appear in another company's system. They post messages that colleagues have already seen. They write to databases that other services depend on. They make API calls to third-party systems with their own state machines and consistency guarantees. You cannot undo an email by deleting it from your outbox — the recipient has already read it. You cannot undo a calendar event by removing it from your calendar — the other attendees still see it on theirs. The undo boundary extends beyond your system into the real world, and the real world does not have a command-z.

This is the fundamental engineering challenge of agentic AI.

Event Sourcing for Agent Actions

The architectural foundation of reversible AI actions is event sourcing — a pattern from distributed systems where every state change is recorded as an immutable event. Instead of storing only the current state, you store the complete sequence of events that produced the current state. This gives you a full history that can be replayed, inspected, and selectively reversed.
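As a rough illustration of the pattern, the sketch below stores an append-only list of events and derives the current state by replaying them. The `Event` type, the event kinds, and the example time slots are assumptions made for this example, not part of any specific framework.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Event:
    """An immutable record of one state change."""
    kind: str   # "booked" or "cancelled" (hypothetical event kinds)
    slot: str   # an illustrative appointment slot


def replay(events: List[Event]) -> Optional[str]:
    """Rebuild the current state (the booked slot, if any) from the history."""
    booked: Optional[str] = None
    for event in events:
        if event.kind == "booked":
            booked = event.slot
        elif event.kind == "cancelled" and booked == event.slot:
            booked = None
    return booked


log: List[Event] = []  # append-only: events are never edited or removed
log.append(Event("booked", "Tue 10:00"))
log.append(Event("cancelled", "Tue 10:00"))
log.append(Event("booked", "Thu 14:00"))

current = replay(log)      # state after the full history
earlier = replay(log[:1])  # state as it was after the first event only
```

Because the history is never overwritten, any earlier state can be reconstructed by replaying a prefix of the log, which is what makes inspection and selective reversal possible.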

For AI agents, this means every action the agent takes is recorded as a structured event: what was done, why it was done (the agent's reasoning), what system was affected, what the previous state was, what the new state is, and what the inverse operation would be (if one exists). This event log is not optional logging or nice-to-have observability — it is the primary data structure that makes undo possible.

The event schema for an agent action looks different from traditional event sourcing because it must capture the agent's decision context. A traditional event might record 'calendar event created at 2:00 PM March 26.' An agent event records 'calendar event created at 2:00 PM March 26, because user said next Thursday, interpreted as March 26 based on current date of March 19, confidence 0.78, alternative interpretation April 2 rejected because agent defaulted to nearest matching date.' The reasoning chain is essential for undo because it lets the user — or a supervisory system — identify where the error originated and determine the correct reversal.
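One possible shape for such an event is sketched below. The field names, the `calendar.create_event` and `calendar.delete_event` identifiers, and the state dictionaries are illustrative assumptions; the values are taken from the dentist example above.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Any, Dict, List, Optional


@dataclass(frozen=True)
class AgentActionEvent:
    action: str                      # what was done
    target_system: str               # which system was affected
    previous_state: Dict[str, Any]   # state before the action
    new_state: Dict[str, Any]        # state after the action
    reasoning: str                   # why the agent did it
    confidence: float
    rejected_alternatives: List[str] = field(default_factory=list)
    inverse_action: Optional[str] = None  # None when no direct inverse exists


event = AgentActionEvent(
    action="calendar.create_event",          # hypothetical action name
    target_system="user_calendar",
    previous_state={"event": None},
    new_state={"event": "March 26, 2:00 PM"},
    reasoning="User said 'next Thursday'; interpreted as March 26 "
              "based on current date of March 19.",
    confidence=0.78,
    rejected_alternatives=["April 2 (agent defaulted to nearest matching date)"],
    inverse_action="calendar.delete_event",  # hypothetical inverse
)

print(json.dumps(asdict(event), indent=2))
```

Keeping `rejected_alternatives` next to the action means a reviewer can see that April 2 was considered and discarded, and can choose it as the correction target.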

Compensating Transactions: Undo for the Real World

When a state change cannot be literally reversed — the email was sent, the meeting was attended, the database record was consumed by a downstream service — the architectural pattern is a compensating transaction. Instead of undoing the original action, you perform a new action that neutralizes its effect.

A compensating transaction for a wrongly sent email is not un-sending (impossible) — it is sending a follow-up correction. For a wrongly booked reservation, it is cancelling the wrong reservation and rebooking the correct one. For a wrongly modified database record, it is writing a correction record that supersedes the original. The compensation does not restore the exact prior state — it moves to a new state that accounts for and corrects the error.

Engineering compensating transactions for AI agents requires pre-defining the compensation strategy for every action type the agent can perform. Before you give an agent the ability to send an email, you must define what the compensating transaction is. Before you let an agent modify a database, you must define the correction pattern. This is constraint engineering — bounding the agent's capabilities to the set of actions that have defined compensation paths. If an action has no viable compensation strategy, the agent should not perform it autonomously.
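A simple way to enforce this rule is a registry that maps each action type to its compensation and refuses autonomous execution for anything unregistered. The sketch below assumes hypothetical action names (`email.send`, `booking.create`) and returns descriptive strings where a real system would call external APIs.

```python
from typing import Callable, Dict

# Maps an action type to the function that compensates for it.
Compensation = Callable[[dict], str]
COMPENSATIONS: Dict[str, Compensation] = {}


def compensates(action_type: str):
    """Decorator that registers a compensating transaction for an action type."""
    def register(fn: Compensation) -> Compensation:
        COMPENSATIONS[action_type] = fn
        return fn
    return register


@compensates("email.send")
def send_correction(params: dict) -> str:
    return f"send correction email to {params['to']}"


@compensates("booking.create")
def cancel_and_rebook(params: dict) -> str:
    return f"cancel booking {params['booking_id']} and rebook"


def can_run_autonomously(action_type: str) -> bool:
    """Only actions with a defined compensation path may run unattended."""
    return action_type in COMPENSATIONS


print(can_run_autonomously("email.send"))    # True
print(can_run_autonomously("post.publish"))  # False
```

The design choice here is that the absence of a compensation is the default: a new tool is blocked from autonomous use until someone writes and registers its correction path.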

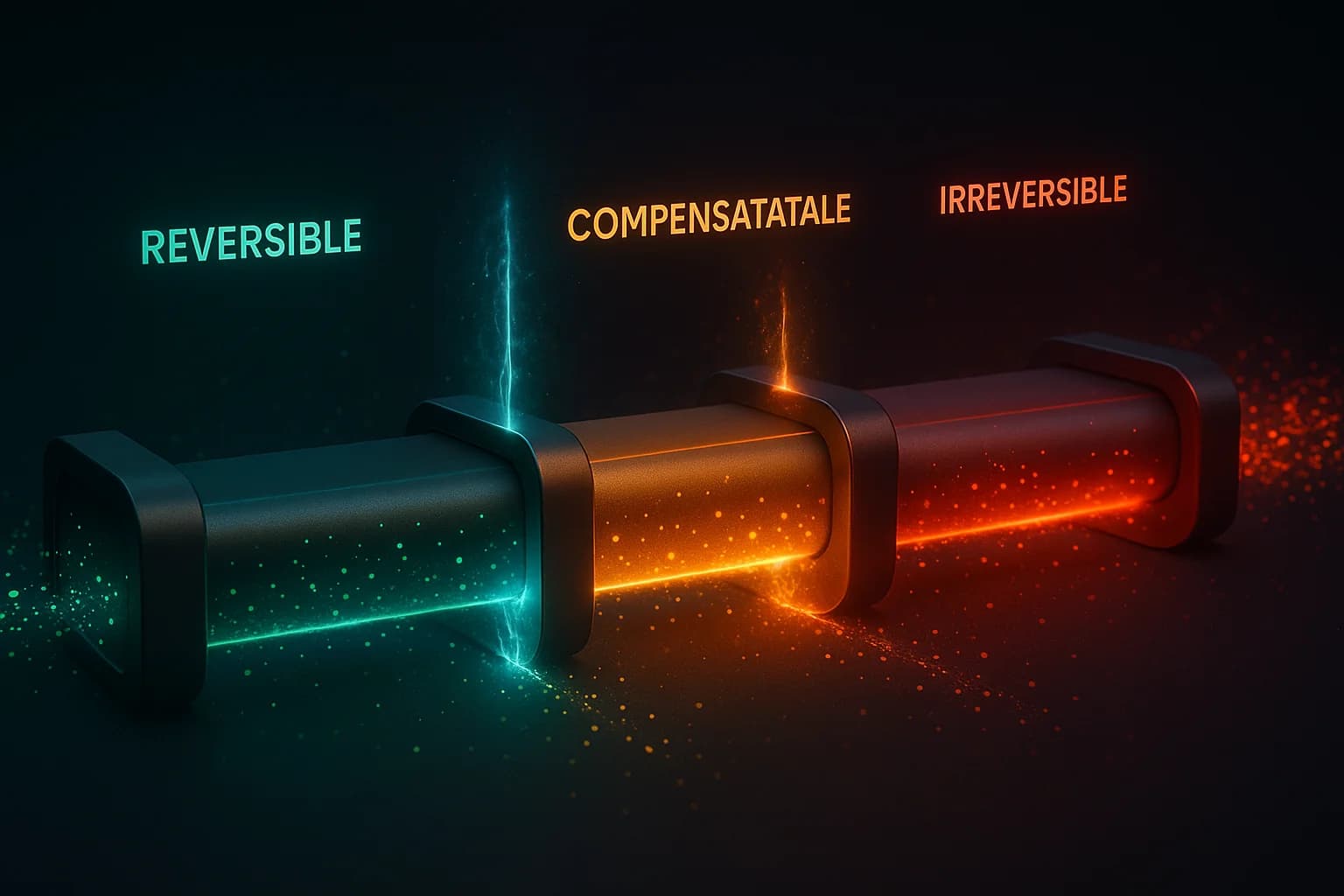

- Reversible actions: can be literally undone (delete a draft, remove a calendar hold, revert a file change) — implement direct reversal

- Compensatable actions: cannot be undone but can be corrected (email sent — send correction, booking made — rebook correctly) — implement compensating transactions

- Observable-only actions: external effects that cannot be compensated (published post seen by thousands, physical item shipped) — require pre-execution confirmation, never automate fully

- Cascading actions: trigger downstream effects in other systems (creating an order triggers fulfillment, payment, notification) — implement checkpoint-based undo that unwinds the cascade
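The four classes above can be encoded as a small lookup that the agent runtime consults before acting. The catalog entries below are hypothetical action names, and treating unknown actions as the most restrictive class is an assumption of this sketch rather than something the classification itself requires.

```python
from enum import Enum


class ActionClass(Enum):
    REVERSIBLE = "reversible"
    COMPENSATABLE = "compensatable"
    OBSERVABLE_ONLY = "observable_only"
    CASCADING = "cascading"


# Hypothetical catalog; a real one would list every tool the agent can call.
ACTION_CATALOG = {
    "draft.delete": ActionClass.REVERSIBLE,
    "email.send": ActionClass.COMPENSATABLE,
    "post.publish": ActionClass.OBSERVABLE_ONLY,
    "order.create": ActionClass.CASCADING,
}

UNDO_STRATEGY = {
    ActionClass.REVERSIBLE: "direct reversal",
    ActionClass.COMPENSATABLE: "compensating transaction",
    ActionClass.OBSERVABLE_ONLY: "none: confirm before execution",
    ActionClass.CASCADING: "checkpoint-based unwind",
}


def classify(action_type: str) -> ActionClass:
    # Assumption: unknown actions fall into the most restrictive class.
    return ACTION_CATALOG.get(action_type, ActionClass.OBSERVABLE_ONLY)


def requires_confirmation(action_type: str) -> bool:
    return classify(action_type) is ActionClass.OBSERVABLE_ONLY


print(UNDO_STRATEGY[classify("order.create")])  # checkpoint-based unwind
```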

The Checkpoint Pattern

For multi-step agent workflows — where the agent performs a sequence of actions to achieve a goal — the checkpoint pattern provides structured undo points. Before each irreversible or high-impact step, the agent creates a checkpoint: a snapshot of the current state across all affected systems, a description of what is about to happen, and the compensation strategy if the user wants to reverse after this point.

The UX implication is that agent workflows should surface checkpoints as natural pause points. Instead of running a ten-step workflow silently and presenting the final result, show the user key checkpoints and let them approve, modify, or abort before the agent proceeds. The checkpoint is not a generic confirmation dialog — it is a state-aware decision point that shows exactly what has been done, what is about to happen, and what the cost of reversal will be at each stage.

Consider a travel booking agent. Step 1: search for flights (reversible — nothing booked yet). Step 2: hold a seat (reversible — holds expire automatically). Step 3: enter payment details (not yet charged — still reversible). Step 4: confirm purchase (irreversible — charged, ticket issued). The checkpoint belongs before Step 4, with full visibility into what was selected and a clear indication that this step crosses the irreversibility boundary. The user can undo steps 1-3 trivially. Step 4 requires the compensating transaction of cancellation, which may involve fees.
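The travel booking flow can be sketched as a loop that pauses for approval before any step marked irreversible. The `Step` type, the `approve` callback, and the compensation descriptions for steps 1 to 3 are illustrative assumptions; in a real product the callback would render the checkpoint UI and wait for the user.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Step:
    name: str
    irreversible: bool
    compensation: str  # what reversal involves once this step has run


def run_workflow(
    steps: List[Step],
    approve: Callable[[Step, List[str]], bool],
) -> List[str]:
    """Run steps in order, pausing at a checkpoint before each irreversible one."""
    completed: List[str] = []
    for step in steps:
        # The checkpoint shows what has been done and what is about to happen.
        if step.irreversible and not approve(step, list(completed)):
            break  # user aborted; earlier steps are still cheaply undoable
        completed.append(step.name)
    return completed


booking = [
    Step("search flights", False, "none needed"),
    Step("hold seat", False, "release hold or let it expire"),
    Step("enter payment details", False, "discard details"),
    Step("confirm purchase", True, "cancellation, possibly with fees"),
]

# A user who declines at the checkpoint stops the workflow before purchase.
declined = run_workflow(booking, approve=lambda step, so_far: False)
approved = run_workflow(booking, approve=lambda step, so_far: True)
```

Passing the list of completed steps into `approve` is what lets the checkpoint be state-aware rather than a generic "Are you sure?" dialog.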

Designing the Undo Experience

The engineering architecture must surface through the UX. Users need to understand three things about every AI action: what the agent did, whether it can be undone, and what undoing it will cost (time, money, social friction). The worst pattern is a generic 'Undo' button that may or may not work depending on the action — users cannot predict the result of clicking it.

The better pattern is contextual undo with explicit consequences. When an AI agent performs an action, show a transient notification with: a plain-language description of the action ('Moved your Tuesday meeting to Wednesday 3 PM'), the undo option with explicit consequences ('Undo — will notify 4 attendees of the change'), and a time window ('Undo available for 30 seconds before calendar notifications are sent'). The undo is not a mystery button — it is a fully transparent reversal with stated costs.
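One way to model such a notification is a small value object that carries the description, the stated consequence, and the undo window. The `UndoOffer` name and its fields are assumptions for illustration; timestamps are passed in explicitly so the logic does not depend on a particular clock.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UndoOffer:
    description: str       # plain-language summary of the action
    consequence: str       # what undoing will cause
    created_at: float      # seconds, from whatever clock the caller uses
    window_seconds: float  # how long undo stays available

    def seconds_left(self, now: float) -> float:
        return max(0.0, self.created_at + self.window_seconds - now)

    def is_available(self, now: float) -> bool:
        return self.seconds_left(now) > 0

    def label(self, now: float) -> str:
        """Text for the notification, including the cost of undoing."""
        if not self.is_available(now):
            return f"{self.description}. Undo window has closed."
        return (f"{self.description}. Undo ({self.consequence}), "
                f"available for {self.seconds_left(now):.0f}s")


offer = UndoOffer(
    description="Moved your Tuesday meeting to Wednesday 3 PM",
    consequence="will notify 4 attendees of the change",
    created_at=100.0,
    window_seconds=30.0,
)
print(offer.label(now=110.0))
print(offer.label(now=131.0))
```

Once the window closes, the interface should switch from direct reversal to whatever compensating transaction is defined for the action, rather than leaving a button that silently does nothing.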

An undo button without stated consequences is a trust liability. Users need to know not just that they can undo, but what undoing will cost — in time, money, and social friction.

For complex multi-step actions, provide a timeline view showing each step, its reversibility status, and the current undo boundary. Users should be able to undo individual steps within a workflow rather than being forced into all-or-nothing reversal. In a travel booking, for example, let the user keep the flight but undo the hotel, or keep the hotel but change the dates. Granular undo respects the user's intent more faithfully than all-or-nothing undo.
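A minimal data model for step-level undo might look like the following. The `TimelineStep` type and the status strings are hypothetical, and the sketch deliberately ignores dependencies between steps, which a real implementation would need to check before allowing a selective undo.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class TimelineStep:
    name: str
    status: str          # "reversible", "compensatable", or "final"
    undone: bool = False


def undo_step(timeline: List[TimelineStep], name: str) -> bool:
    """Undo a single named step, leaving the rest of the workflow intact."""
    for step in timeline:
        if step.name == name and not step.undone and step.status != "final":
            step.undone = True
            return True
    return False  # unknown step, already undone, or past the undo boundary


trip = [
    TimelineStep("book flight", "compensatable"),
    TimelineStep("book hotel", "compensatable"),
    TimelineStep("add calendar hold", "reversible"),
]

undo_step(trip, "book hotel")  # keep the flight, undo only the hotel
active = [step.name for step in trip if not step.undone]
print(active)  # ['book flight', 'add calendar hold']
```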

Implementation Checklist

- Catalog every action type your AI agent can perform. Classify each as reversible, compensatable, observable-only, or cascading

- For reversible actions, implement direct undo by storing the inverse operation in the event log

- For compensatable actions, pre-define and test the compensating transaction for each action type

- For observable-only actions, require explicit user confirmation before execution — never automate these

- Implement event sourcing for all agent actions with full reasoning chain capture

- Add checkpoint gates before irreversible actions in multi-step workflows

- Design contextual undo notifications with explicit consequence descriptions and time windows

- Build a timeline view for complex workflows that shows step-level undo granularity

- Test undo paths as rigorously as you test happy paths — a broken undo is worse than no undo because it creates false confidence
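For the last item, one useful check is a round-trip test: apply an action, apply its undo, and assert that the state matches the original. The helper below is a sketch under the assumption that state can be deep-copied and compared in memory; the calendar dictionary and the three functions are hypothetical stand-ins for real integrations, which would need fakes or sandbox environments.

```python
import copy
from typing import Any, Callable


def round_trips(
    state: Any,
    do: Callable[[Any], None],
    undo: Callable[[Any], None],
) -> bool:
    """Return True if applying `do` and then `undo` restores the original state."""
    before = copy.deepcopy(state)
    do(state)
    undo(state)
    return state == before


calendar = {"holds": ["Tue 10:00"]}


def add_hold(cal: dict) -> None:
    cal["holds"].append("Wed 15:00")


def remove_hold(cal: dict) -> None:
    cal["holds"].remove("Wed 15:00")


def broken_undo(cal: dict) -> None:
    cal["holds"].clear()  # bug: removes the user's other holds as well


good = round_trips(calendar, add_hold, remove_hold)
bad = round_trips(calendar, add_hold, broken_undo)
print(good, bad)  # True False
```

The `broken_undo` case is the kind of defect this check exists to catch: the undo appears to work because the new hold is gone, but it has silently destroyed unrelated state.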

Key Takeaways

- Traditional undo (state rollback) fails for AI agents because agents modify external state — emails sent, bookings made, databases updated — that cannot be simply reverted

- Event sourcing with reasoning chains provides the architectural foundation: record every action, its context, and its inverse operation

- Compensating transactions handle actions that cannot be literally undone by performing corrective actions that neutralize the original error

- The checkpoint pattern creates structured undo points in multi-step workflows, with increasing confirmation requirements as actions approach irreversibility

- If an action has no viable compensating transaction, the agent should not perform it autonomously — this is the fundamental safety boundary

- Undo UX must be contextual and consequence-aware: show what happened, whether it can be reversed, and what reversal will cost

The measure of an AI agent's maturity is not what it can do — it is what it can undo. The most sophisticated agent in the world is dangerous if it takes actions it cannot reverse. The most reliable agent in the world is trustworthy precisely because every action it takes has a defined path back. Building reversible AI is not a defensive engineering exercise. It is the foundation of user trust. And trust, in the agentic era, is the only competitive advantage that compounds.

AI Agents · Undo Architecture · Event Sourcing · Reversibility · Agent Safety · Engineering Patterns
